By Tao Sauvage

Last year I acquired a Linksys Smart Wi-Fi router, more specifically the EA3500 Series. I chose Linksys (previously owned by Cisco and currently owned by Belkin) due to its popularity and I thought that it would be interesting to have a look at a router heavily marketed outside of Asia, hoping to have different results than with my previous research on the BHU Wi-Fi uRouter, which is only distributed in China.

Smart Wi-Fi is the latest family of Linksys routers and includes more than 20 different models that use the latest 802.11N and 802.11AC standards. Even though they can be remotely managed from the Internet using the Linksys Smart Wi-Fi free service, we focused our research on the router itself.

With my friend @xarkes_, a security aficionado, we decided to analyze the firmware (i.e., the software installed on the router) in order to assess the security of the device. The technical details of our research will be published soon after Linksys releases a patch that addresses the issues we discovered, to ensure that all users with affected devices have enough time to upgrade.

In the meantime, we are providing an overview of our results, as well as key metrics to evaluate the overall impact of the vulnerabilities identified.

Security Vulnerabilities

After reverse engineering the router firmware, we identified a total of 10 security vulnerabilities, ranging from low- to high-risk issues, six of which can be exploited remotely by unauthenticated attackers.

Two of the security issues we identified allow unauthenticated attackers to create a Denial-of-Service (DoS) condition on the router. By sending a few requests or abusing a specific API, the router becomes unresponsive and even reboots. The Admin is then unable to access the web admin interface and users are unable to connect until the attacker stops the DoS attack.

Attackers can also bypass the authentication protecting the CGI scripts to collect technical and sensitive information about the router, such as the firmware version and Linux kernel version, the list of running processes, the list of connected USB devices, or the WPS pin for the Wi-Fi connection. Unauthenticated attackers can also harvest sensitive information, for instance using a set of APIs to list all connected devices and their respective operating systems, access the firewall configuration, read the FTP configuration settings, or extract the SMB server settings.

Finally, authenticated attackers can inject and execute commands on the operating system of the router with root privileges. One possible action for the attacker is to create backdoor accounts and gain persistent access to the router. Backdoor accounts would not be shown on the web admin interface and could not be removed using the Admin account. It should be noted that we did not find a way to bypass the authentication protecting the vulnerable API; this authentication is different than the authentication protecting the CGI scripts.

Linksys has provided a list of all affected models:

- EA2700

- EA2750

- EA3500

- EA4500v3

- EA6100

- EA6200

- EA6300

- EA6350v2

- EA6350v3

- EA6400

- EA6500

- EA6700

- EA6900

- EA7300

- EA7400

- EA7500

- EA8300

- EA8500

- EA9200

- EA9400

- EA9500

- WRT1200AC

- WRT1900AC

- WRT1900ACS

- WRT3200ACM

Cooperative Disclosure

We disclosed the vulnerabilities and shared the technical details with Linksys in January 2017. Since then, we have been in constant communication with the vendor to validate the issues, evaluate the impact, and synchronize our respective disclosures.

We would like to emphasize that Linksys has been exemplary in handling the disclosure and we are happy to say they are taking security very seriously.

We acknowledge the challenge of reaching out to the end-users with security fixes when dealing with embedded devices. This is why Linksys is proactively publishing a security advisory to provide temporary solutions to prevent attackers from exploiting the security vulnerabilities we identified, until a new firmware version is available for all affected models.

Metrics and Impact

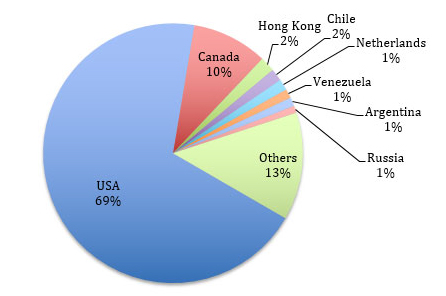

As of now, we can already safely evaluate the impact of such vulnerabilities on Linksys Smart Wi-Fi routers. We used Shodan to identify vulnerable devices currently exposed on the Internet.

We found about 7,000 vulnerable devices exposed at the time of the search. It should be noted that this number does not take into account vulnerable devices protected by strict firewall rules or running behind another network appliance, which could still be compromised by attackers who have access to the individual or company’s internal network.

A vast majority of the vulnerable devices (~69%) are located in the USA and the remainder are spread across the world, including Canada (~10%), Hong Kong (~1.8%), Chile (~1.5%), and the Netherlands (~1.4%). Venezuela, Argentina, Russia, Sweden, Norway, China, India, UK, Australia, and many other countries representing < 1% each.

We performed a mass-scan of the ~7,000 devices to identify the affected models. In addition, we tweaked our scan to find how many devices would be vulnerable to the OS command injection that requires the attacker to be authenticated. We leveraged a router API to determine if the router was using default credentials without having to actually authenticate.

We found that 11% of the ~7000 exposed devices were using default credentials and therefore could be rooted by attackers.

Recommendations

We advise Linksys Smart Wi-Fi users to carefully read the security advisory published by Linksys to protect themselves until a new firmware version is available. We also recommend users change the default password of the Admin account to protect the web admin interface.

Timeline Overview

- January 17, 2017: IOActive sends a vulnerability report to Linksys with findings

- January 17, 2017: Linksys acknowledges receipt of the information

- January 19, 2017: IOActive communicates its obligation to publicly disclose the issue within three months of reporting the vulnerabilities to Linksys, for the security of users

- January 23, 2017: Linksys acknowledges IOActive’s intent to publish and timeline; requests notification prior to public disclosure

- March 22, 2017: Linksys proposes release of a customer advisory with recommendations for protection

- March 23, 2017: IOActive agrees to Linksys proposal

- March 24, 2017: Linksys confirms the list of vulnerable routers

- April 20, 2017: Linksys releases an advisory with recommendations and IOActive publishes findings in a public blog post