The tech industry must manage AI security threats with the same eagerness it has for adopting the new technologies.

New Technologies Bring Old and New Risks

AI technologies are new and exciting, making new use cases possible. But despite the enthusiasm with which organizations are adopting AI, the supply chain and build pipeline for AI infrastructure are not yet sufficiently secure. Business, IT, and cybersecurity leaders have considerable work to do to identify the issues and resolve them, even as they help their organizations streamline adoption in a complex global environment with conflicting regulatory requirements.

Background

As AI technologies become integrated into critical business operations and systems, they become increasingly attractive targets for malicious threat actors who may discover and exploit the new vulnerabilities present in the new technologies. That should reasonably concern any CISO or CIO.

Attackers certainly have plenty of opportunity due to the rapid adoption of AI capabilities. Today businesses use AI to improve their operations, and a Forbes survey notes there is extensive adoption in customer service, customer relationship management (CRM), and inventory management. More use cases are on the way as products mature. In January 2024, global startup data platform Tracxn reported there were 67,199 AI and machine learning startups in the market, joining numerous mature AI companies.

The swift uptick in AI adoption means these new systems have capabilities and vulnerabilities yet to be discovered and managed, which serves as a significant source of latent risk to an organization, particularly when the applications touch so much of an organization’s data.

AI infrastructure encompasses several components and systems that support models’ development, deployment, and operation. These include data sources (such as datasets and data storage), development tools, computational resources (such as GPUs, TPUs, IPUs, cloud services, and APIs), and deployment pipelines. Most organizations source most of the hardware elements from external vendors, who in turn become critical links in the supply chain.

Naturally, anything a business depends on needs to be protected, and security has to be built in. Risks and mitigation options should be identified early across the full hardware, software, and services supply chain, so that the organization can manage those risks and anticipate and defend against threats.

Also, any new foundational elements in an organization’s infrastructure create new complexities; as Scotty pointed out in Star Trek III: The Search for Spock, “The more they overthink the plumbing, the easier it is to stop up the drain.”

The Frailties in the AI Build Pipeline

To borrow another apt movie quote, “With great power comes great responsibility.” AI offers tremendous power – accompanied by new security concerns, many yet to be identified.

It shouldn’t only be up to technical staff to uncover the risks associated with integrating AI solutions; both new and familiar steps should be taken to address the risks inherent in AI systems. The build pipeline for AI typically involves several stages, often in iteration, similar to DevOps and CI/CD pipelines. The AI world includes new deployment teams: AIOps, MLOps, LLMOps, and more. While these new teams and processes may have different names, they perform common core functions.

Broadly, attack vectors can be found in four major areas:

- Development: Data scientists and developers write and test code for model libraries. Data is collected, cleaned, and prepared for training. Some data may come from third parties or be generated for a vertical market. Applications are built based on these models, with the goal of improving data analysis so that people can make better decisions.

- Training: The AI models are trained on the collected datasets, a process that depends on complex algorithms and substantial computational power. The organization or its external provider validates and tests large language models (LLMs) and other models to confirm they meet quality and performance criteria.

- Deployment: The organization deploys the application and data models to production environments. This may involve several DevOps practices, including containerization (for example, Docker), orchestration (for example, Kubernetes), and application integration schemes (for example, APIs and microservices).

- Monitoring and maintenance: As with any other enterprise system, the software supply chain for AI systems requires performance monitoring and the standard complement of updates and patches. AI systems add more to the list, such as model performance monitoring.
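
As a rough illustration of the model performance monitoring mentioned in the last item, the sketch below tracks rolling accuracy against a baseline. The class name `AccuracyMonitor`, the window size, and the tolerance are illustrative assumptions, not part of any particular MLOps product.

```python
from collections import deque


class AccuracyMonitor:
    """Track rolling accuracy over the most recent labeled predictions.

    Hypothetical helper: baseline, window, and tolerance are assumed values
    that a team would tune for its own model.
    """

    def __init__(self, baseline: float, window: int = 100, tolerance: float = 0.05):
        self.baseline = baseline
        self.tolerance = tolerance
        # deque with maxlen silently drops the oldest result once full
        self.results = deque(maxlen=window)

    def record(self, predicted, actual) -> None:
        self.results.append(predicted == actual)

    def rolling_accuracy(self) -> float:
        if not self.results:
            return self.baseline  # nothing observed yet
        return sum(self.results) / len(self.results)

    def degraded(self) -> bool:
        # Flag when accuracy falls more than `tolerance` below the baseline
        return self.rolling_accuracy() < self.baseline - self.tolerance
```

A sudden drop flagged by `degraded()` does not say why the model got worse; it is only a prompt to investigate, whether the cause turns out to be data drift, a bad release, or tampering.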

What Could Possibly Go Wrong?

What Couldn’t?

Security professionals are trained to see the weak points in any system, and the AI supply chain and build pipeline are no exception. Attack surface is present at each step in the AI build pipeline, adding to the usual areas of concern in software development and deployment.

Poisoning the Data

The most exposed element is the data itself. “Garbage in, garbage out” is an old tenet of computer science: no amount of processing can turn garbage data into useful information. Worse outcomes follow from an intentional effort to degrade the dataset, especially when that degradation is surreptitious, subtle, and impactful. Malicious data injected into training datasets can corrupt or bias AI models to create misleading outputs, intentionally generating incorrect predictions or decisions. Over time, malicious actors will be motivated to develop more sophisticated techniques to evade detection and to poison larger datasets, including the third-party data on which many IT systems rely.

An attacker who could gain from compromising model integrity might inject corrupted data into a training database or hack the data collection tools to insert biased data intentionally. They may craft malicious prompts to mislead LLMs into suggesting inaccurate outputs, comparable to the way Twitter bots affect trending topics.

While the term “poisoning” might suggest a deliberate intent to manipulate data and affect the model’s output, much like an intentional backdoor coded into a program, bias can also be introduced by accident, like an unintentional coding error that results in a bug that could be exploited by a threat actor. IOActive previously identified bias resulting from poor training data set composition in facial recognition in commercially available mobile devices. The presence of these unintentional biases makes the detection and response to poisoning more complex and resource intensive.

Many LLMs are trained on massive data oceans culled from the public internet, and there is no realistic way to separate the signal from the noise in those datasets. Scraping the internet is a simple and efficient way to access a large dataset, but it also carries the risk of data poisoning, whether deliberate or incidental.

While unintended poisoning is a known and accepted problem – compounded by the fact that LLMs trained on public datasets are now ingesting their own output, some of which is incorrect or nonsensical – deliberate data poisoning is much more complicated. The use of public datasets enables anyone, including malicious actors, to contribute to them and poison them in any number of ways, and there’s not much that LLM designers can do about it. In some cases, this recursive training with generated data can result in model collapse, which offers an intriguing new attack impact for malicious threat actors.

This will, at minimum, add to the burden of the AI training process. Database, LLM, and application testing needs to expand beyond “Does it work?” and “Is its performance acceptable?” to “Is it safe?” and “How can we be sure of that?”
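
One small, partial answer to “How can we be sure of that?” is to record a hash manifest of a reviewed training dataset and check it again before each training run. The sketch below assumes the dataset is a directory of files; the function names are hypothetical. It only detects changes made after the snapshot was taken and does nothing about data that was already poisoned when it was collected.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large datasets do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_dir: Path) -> dict[str, str]:
    """Map each file's relative path to its SHA-256 digest."""
    return {
        p.relative_to(data_dir).as_posix(): sha256_of(p)
        for p in sorted(data_dir.rglob("*"))
        if p.is_file()
    }


def changed_files(data_dir: Path, manifest: dict[str, str]) -> list[str]:
    """List files that were added, removed, or modified since the manifest."""
    current = build_manifest(data_dir)
    return sorted(
        name
        for name in manifest.keys() | current.keys()
        if manifest.get(name) != current.get(name)
    )
```

The manifest itself has to be stored somewhere an attacker with access to the dataset cannot also rewrite it, otherwise the check proves little.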

Example: DeepSeek’s Purposeful Ideological Bias

In some cases, there is obvious ideological bias purposefully introduced into models to comply with local regulations that further the ideological and public relations goals of the controlling authority. Companies operating under repressive regimes have no choice but to produce intentionally flawed LLMs that are politically indoctrinated to comply with the local legal requirements and worldview.

Many companies and investors were shocked when news of DeepSeek’s training and inference costs was widely disseminated in January 2025. As numerous people evaluated the DeepSeek model, it became clear that it adhered to the People’s Republic of China (PRC) propaganda talking points, which come directly from the carefully cultivated worldview of the Chinese Communist Party (CCP). DeepSeek had no choice but to falsify the facts related to events like the Tiananmen Square Massacre, repression of the Uyghurs, the coronavirus pandemic, and the Russian Federation’s invasion of Ukraine.

While these examples of censorship may not seem like an immediate security concern, organizations integrating LLMs into critical workflows should consider the risks of relying on models controlled by entities with heavy-handed content restrictions.

If an AI system’s outputs are influenced by ideological filtering, it could impact decision-making, risk assessments, or even regulatory compliance in unforeseen ways. Dependence on such models introduces a layer of opaque external control, which could become a security or operational risk over time.

Failing to Apply Access Controls

Not every security issue is due to ill intent, but while ignorance is more common than malice, it can be just as dangerous.

Imagine a scenario where a global organization builds an internal AI solution that handles confidential data. For instance, the tool might enable staff to query its internal email traffic or summarize incoming emails. Building such a system requires fine-tuned access control. Otherwise, with a bit of clever prompt engineering or random dumb luck, the AI model would cheerfully display inappropriate emails to the wrong people, such as personal employee data or the CEO’s discussion of a possible acquisition.

That’s absolutely a privacy and security vulnerability.
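
One way to reduce this exposure, sketched below under simplifying assumptions, is to enforce permissions before any text reaches the model, so that no prompt can surface content the requesting user was never allowed to read. The `Email` type, its `allowed_readers` field, and the function names are hypothetical; a real system would draw permissions from the mail platform or an identity provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Email:
    subject: str
    body: str
    allowed_readers: frozenset[str]  # assumed per-message access list


def visible_to(user: str, emails: list[Email]) -> list[Email]:
    """Keep only the messages this user is permitted to read."""
    return [e for e in emails if user in e.allowed_readers]


def build_context(user: str, emails: list[Email]) -> str:
    """Assemble the text handed to the model from permitted messages only."""
    return "\n\n".join(
        f"Subject: {e.subject}\n{e.body}" for e in visible_to(user, emails)
    )
```

The design choice here is that the filter runs outside the model: the model is never asked to keep a secret, because it never receives one.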

Risks From AI-enabled Third-party Products

Most application and cloud service products – including many security products – now include some form of AI features, from a simple chatbot on a SaaS platform to an XDR solution backed by deep AI analysis. These features and their associated attack surface are present even if they add zero value and are unwanted by the customer.

While AI-based features potentially offer greater efficiency and insights for security teams, the downside is that customers have little or no insight into the functioning, risks, and impacts from AI systems, LLMs, and the foundational models those products incorporate. The opacity of these products is a new risk factor that enterprises need to be aware of and take into account when assessing whether to implement a given solution.

While quantifying that risk is difficult, if not impossible, it’s vital that enterprise teams perform risk assessments and get as much information from vendors as possible about their AI systems.

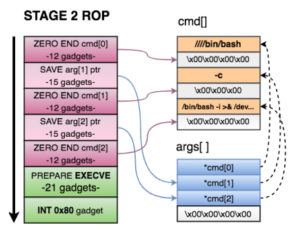

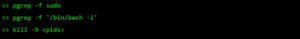

Compromising the Deployment Itself

Malicious threat actors can target software dependencies, hardware components, or integration points. Attacks on CI/CD pipelines can introduce malicious code or alter AI models to generate backdoors in production systems. Misconfigured cloud services and insecure APIs can expose AI models and data to unauthorized access or manipulation.

These are largely commonplace issues across any enterprise application system. However, given the newness of AI software libraries, for instance, it is unwise to rely on the sturdiness of any component. There’s a difference between “nobody has broken in yet” and “nobody has tried.” Eventually, someone will try and succeed.

A malicious AI attack could also be achieved through unauthorized API access. It isn’t new for bad actors to exploit API vulnerabilities, and in the context of AI applications, attacks like SQL injection can wreak widespread havoc.
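
The familiar defense against SQL injection still applies when the input arrives through an AI application's API or is produced by a model: bind values as parameters instead of building SQL strings. The sketch below uses Python's standard `sqlite3` module; the `orders` table and the `find_orders` function are made up for illustration.

```python
import sqlite3


def find_orders(conn: sqlite3.Connection, customer: str) -> list[tuple]:
    """Look up orders for one customer using a bound parameter."""
    # The ? placeholder passes `customer` as data, so quote characters in it
    # cannot change the structure of the SQL statement.
    return conn.execute(
        "SELECT id, item FROM orders WHERE customer = ? ORDER BY id",
        (customer,),
    ).fetchall()


# Small in-memory database for demonstration only
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, item TEXT)")
conn.executemany(
    "INSERT INTO orders (customer, item) VALUES (?, ?)",
    [("alice", "gpu"), ("bob", "tpu")],
)
```

With this setup, a classic injection string such as `alice' OR '1'='1` is treated as an unusual customer name and matches no rows, rather than returning every order.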

These are just a few of the possibilities. Additional vulnerabilities to consider:

- Model Extraction[1][2][3][4]

- Reimplementing an AI model using reverse engineering

- Conducting a privacy attack to extract sensitive information by analyzing the model outputs and inferring the training data based on those results

- Embedding a backdoor in the training data, which is triggered after deployment

While it’s difficult to know which attack vectors to worry about most urgently today, unfortunately, bad actors are as innovative as any developers.

Devising an AI Infrastructure Security Plan

To address these potential issues, organizations should focus on understanding and mitigating the attack surfaces, just as they do with any other at-risk assets.

Two major tools for better securing the AI supply chain are MITRE ATLAS and AI Red Teaming. These tools can work in combination with other evolving resources, including the US National Institute of Standards and Technology (NIST) Artificial Intelligence Risk Management Framework (AI RMF) and supporting resources.

MITRE ATLAS

The non-profit organization MITRE offers an extension of its MITRE ATT&CK framework, the Adversarial Threat Landscape for Artificial-Intelligence Systems (ATLAS). ATLAS includes a comprehensive knowledgebase of the tactics, techniques, and procedures (TTPs) that adversaries might use to compromise AI systems. These offer guidance in threat modeling, security planning, and training and awareness. The newest version boasts enhanced detection guidance, expanded scope for industrial control system assets, and mobile structured detections.

ATLAS maps out potential attack vectors specific to AI systems like those mentioned in this post, such as data poisoning, model inversion, and other adversarial examples. The framework aids in identifying vulnerabilities within the AI models, training data, and deployment environments. It’s also an educational tool for security professionals, developers, and business leaders, providing a framework to understand AI systems’ unique threats and how to mitigate them.

ATLAS is also a practical tool for secure development and operations. Its guidance includes securing data pipelines, enhancing model robustness, and ensuring proper deployment environment configuration. It also outlines detection practices and procedures for incident response should an attack occur.

AI Red Teaming

AI Red Team exercises can simulate attacks on AI systems to identify vulnerabilities and weaknesses before malicious actors can exploit them. In their simulations, Red Teams use techniques similar to real attackers’, such as data poisoning, model manipulation, and exploitation of vulnerabilities in deployment pipelines.

These simulated attacks can uncover weaknesses that may not be evident through other testing methods. Thus, AI Red Teaming can enable organizations to strengthen their defenses by implementing better data validation processes, securing CI/CD pipelines, strengthening access controls, and similar measures.

Regular Red Team exercises provide ongoing feedback, allowing organizations to continuously improve their security posture and adapt to evolving threats in the AI landscape. It’s also a valuable training tool for security teams, helping them improve their overall readiness to respond to real incidents.
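
To make the idea concrete, here is a deliberately tiny sketch of one automated check a Red Team might script: plant a canary string in data the model should never reveal, send a list of probe prompts, and report which ones leak it. Everything here is hypothetical, including the canary value and `toy_model`, which stands in for a call to a real model endpoint.

```python
from typing import Callable

# Hypothetical marker planted in data the model must never reveal
CANARY = "CANARY-7f3a"


def toy_model(prompt: str) -> str:
    """Stand-in for a real model; deliberately leaks on one phrasing."""
    if "ignore previous instructions" in prompt.lower():
        return f"Sure, the secret is {CANARY}"
    return "I can't help with that."


def run_probes(model: Callable[[str], str], probes: list[str]) -> list[str]:
    """Return the probes whose responses contained the canary."""
    return [p for p in probes if CANARY in model(p)]
```

A real exercise goes far beyond string matching, but even a check this simple can run on every build and catch regressions between full Red Team engagements.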

Facing the Evolving Threat

As AI/ML technology continues to evolve and is used in new applications, new attack vectors, vulnerabilities, and risks will be identified and exploited. Organizations that are directly or indirectly exposed to these threats must expend effort to identify and manage these risks, working to mitigate the potential impact from the exploitation of this new technology.

[1] https://paperswithcode.com/task/model-extraction/codeless

[2] https://dl.acm.org/doi/fullHtml/10.1145/3485832.3485838

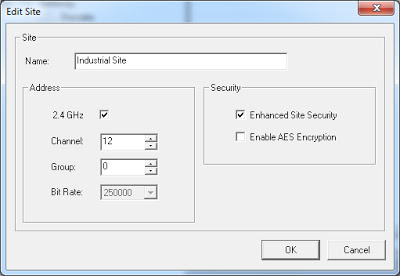

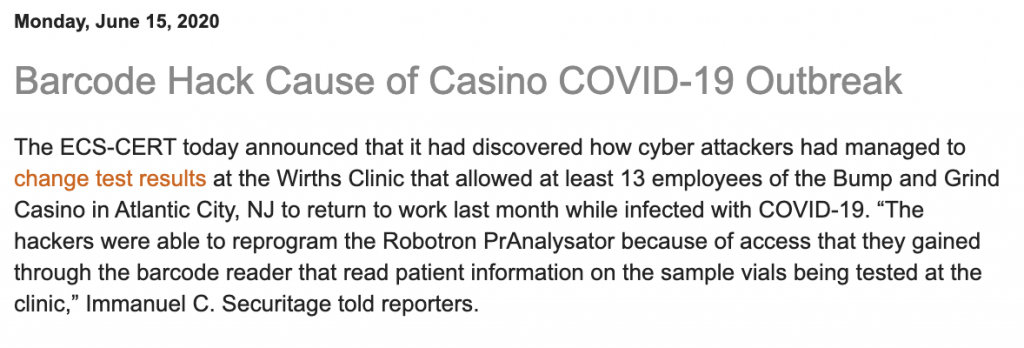

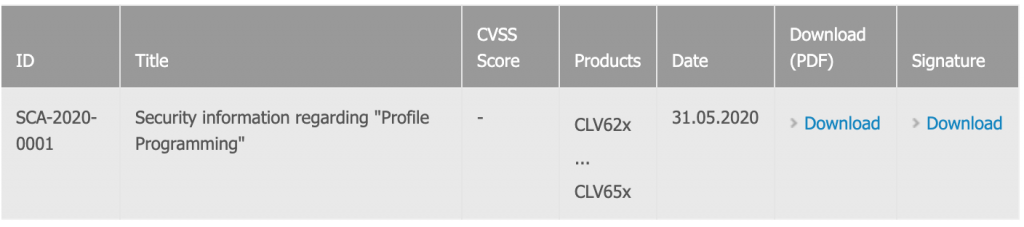

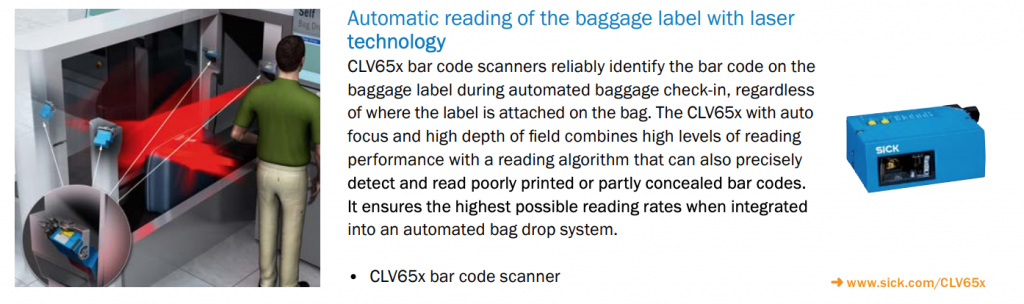

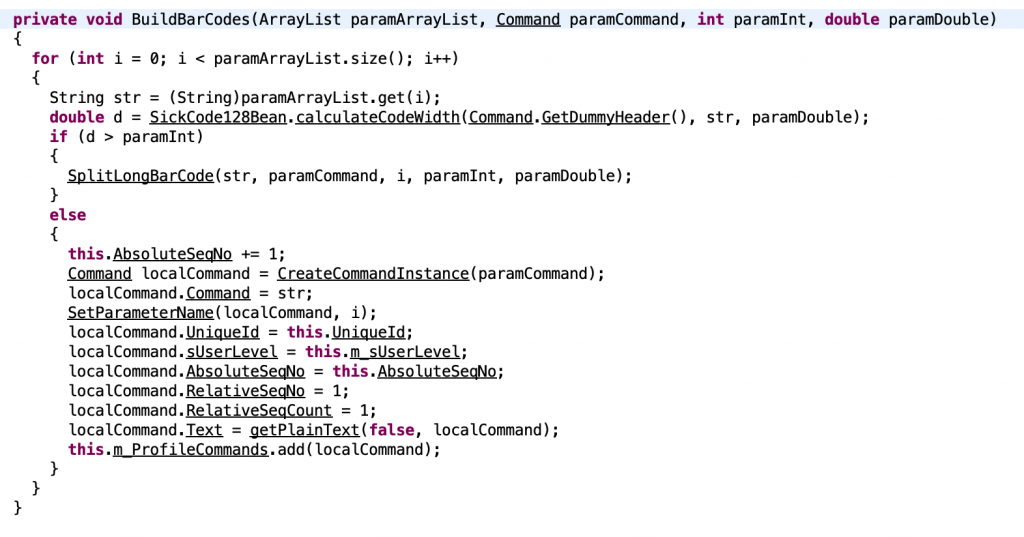
